
Post by lowell on Nov 4, 2015 17:24:52 GMT -6

"Why does it keep repeating itself? There are so many stars that it would seem to be a relatively common occurrence."

If you mean why the fusion reaction in the Sun continues: not all of the hydrogen is fused into helium at once. Scientists were concerned about how much hydrogen is left, because if the majority of it has been fused into helium, the Sun might be near the end of its typical light-emitting phase of existence. They worried that it might be close to the time when it will go nova. So they attempted to measure the number of neutrinos being emitted by the Sun. This was considered to be the best way to measure how much hydrogen and helium are in the Sun. Yes, there are many, many stars that are nearly identical to the Sun.


orogenicman

RMem

Old enough to remember how to make stone tools

Posts: 189


Post by orogenicman on Nov 5, 2015 0:05:43 GMT -6

Magnetism is what you see when the electric field is moving compared to you. Induction is the other side of that coin: when the magnetic field is moving compared to you, you also see an electric field.

As for the sun, here is an explanation:

physics.stackexchange.com/questions/130209/how-can-it-be-that-the-sun-emits-more-than-a-black-body

The total radiative power emitted by the Sun is equivalent to the total radiative power emitted by an ideal black body with a temperature of 5778 K and a surface area equal to that of the Sun. This 5778 K is the Sun's effective temperature. The spectrum of the Sun is very close to that of a 5778 K black body, but there are deviations. Some are due to absorption and emission, but others result from three key items:

• There is no such thing as a black body. The concept of a black body is an idealization based on some simplifying assumptions. The Sun doesn't exactly satisfy those simplifying assumptions.

• That effective temperature of 5778 K is based on total radiative power, the area under the curve of the Planck distribution. If the spectrum of sunlight falls short of the 5778 K black body spectrum at some wavelengths, it must necessarily rise above the 5778 K black body spectrum at others.

• The primary reason the Sun fails to satisfy the assumptions that underlie the Planck distribution is that we are seeing light from multiple temperature sources. The rest of this answer goes into this in detail.
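
As a rough check on what "effective temperature" means in the points above, here is a minimal Python sketch of the Stefan-Boltzmann law, L = 4πR²σT⁴. The solar radius is an assumed nominal value that does not appear in the quoted answer; only the 5778 K figure comes from it.

```python
import math

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
R_SUN = 6.957e8         # assumed nominal solar radius in metres (not from the quoted answer)
T_EFF = 5778.0          # effective temperature quoted above, in kelvin


def blackbody_luminosity(radius_m, temperature_k):
    """Total power radiated by an ideal black-body sphere of the given size."""
    area = 4.0 * math.pi * radius_m ** 2
    return area * SIGMA * temperature_k ** 4


luminosity = blackbody_luminosity(R_SUN, T_EFF)
print(f"{luminosity:.3e} W")
```

With these inputs the result lands close to the commonly quoted solar output of a few times 10^26 watts, which is what defines the effective temperature in the first place.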

The Sun is not a solid body. It doesn't have a surface from which the radiation originates. The radiation we see from the Sun comes primarily from the Sun's photosphere, a roughly 500 kilometer thick layer near the top of the Sun. The chromosphere, transition region, and corona are above the photosphere. While these higher layers do make solar radiation deviate from the ideal black body curve, the primary source is the photosphere itself.

The amount of light that is transmitted into empty space is a sharply increasing function of distance from the center. However, it is not a delta distribution. The light that does get through from those deeper layers has a higher temperature than the layers above it. The bulk of the radiation we see from the Sun comes from a ~500 km thick layer called the photosphere. The top of the photosphere has a temperature of about 4400 K and has a pressure of about 86.8 pascals. The bottom has a temperature of about 6000 K and a pressure of about 12500 pascals.

What we see is a blend of the radiation from throughout the photosphere. Some of the light comes from the top of the photosphere, some from the middle, some from the bottom, roughly weighted by pressure. The total spectrum looks close to that of a 5778 K black body, but the contribution from the bottommost part of the photosphere tilts the spectrum away from the ideal a bit, making the spectrum a tiny bit heavy for shorter wavelength radiation.
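
The tilt toward shorter wavelengths can be illustrated with the Planck function itself. This is only a sketch: it compares a single 6000 K black body (standing in for the bottom of the photosphere) with the 5778 K reference, and it does not model the real pressure-weighted blend. The function names and sample wavelengths are illustrative choices.

```python
import math

H = 6.62607015e-34  # Planck constant, J s
C = 2.99792458e8    # speed of light, m/s
K_B = 1.380649e-23  # Boltzmann constant, J/K


def planck(wavelength_m, temperature_k):
    """Black-body spectral radiance B_lambda(T), in W sr^-1 m^-3."""
    x = H * C / (wavelength_m * K_B * temperature_k)
    return (2.0 * H * C ** 2 / wavelength_m ** 5) / math.expm1(x)


def hot_to_effective_ratio(wavelength_m, hot_k=6000.0, effective_k=5778.0):
    """How much brighter the hotter layer is than the 5778 K reference."""
    return planck(wavelength_m, hot_k) / planck(wavelength_m, effective_k)


ratio_uv = hot_to_effective_ratio(300e-9)   # near ultraviolet
ratio_ir = hot_to_effective_ratio(1000e-9)  # near infrared
print(ratio_uv, ratio_ir)
```

The hotter layer outshines the reference at every wavelength, but by a larger factor in the ultraviolet than in the infrared, so any admixture of it skews the blended spectrum toward short wavelengths.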

"Why does it keep repeating itself? There are so many stars that it would seem to be a relatively common occurrence."

Why does what keep repeating itself? I don't understand the question.


Post by lowell on Nov 5, 2015 0:25:05 GMT -6

'In the late 1940s, Feynman, using his best safecracking techniques, doodled on scraps of paper, tracing pictorially what happened when electrons collided with one another. Since each doodle was actually a shorthand notation for a tremendous amount of tedious mathematics, Feynman was able to condense hundreds of pages of algebra, and isolate the troublesome infinities. These mathematical doodlings allowed him to see farther than those who were lost in a jungle of complex mathematics.

Not surprisingly, "Feynman diagrams" were a source of controversy within the physics community, which was split on how to deal with them. Because Feynman could not derive his rules, his critics thought that these diagrams were silly or perhaps just another of his famous practical jokes. Some of his critics preferred another version of QED being formulated by Julian Schwinger of Harvard University and Shinichiro Tomonaga of Tokyo. However, the more perceptive physicists realized that Feynman was on to something potentially profound with these pictures. Princeton physicist Freeman Dyson explained the source of this confusion:

The reason Dick's physics was so hard for ordinary people to grasp was that he did not use equations. The usual way theoretical physics was done since the time of Newton was to begin by writing down some equations and then to work hard calculating solutions of the equations - Dick just wrote down the solutions out of his head without ever writing down the equations. He had a physical picture of the way things happen, and the picture gave him the solutions directly with a minimum of calculation. It was no wonder that people who had spent their lives solving equations were baffled by him. Their minds were analytical, his was pictorial.


Deleted

Deleted Member

Posts: 0


Post by Deleted on Nov 5, 2015 5:34:47 GMT -6

"Why does it keep repeating itself? There are so many stars that it would seem to be a relatively common occurrence."

"Why does what keep repeating itself? I don't understand the question."

Okay, all of the complicated processes going on in the sun would seem like a relatively simple thing, seeing as there are millions of stars. It may take a lot of time to form a star, but the process of forming one can't be too complex or there wouldn't be so many.


Post by Deleted on Nov 5, 2015 5:37:23 GMT -6

That may be a little off the topic but that's my observation, more of a question really.


Post by orogenicman on Nov 5, 2015 11:37:17 GMT -6

"Why does what keep repeating itself? I don't understand the question."

"Okay, all of the complicated processes going on in the sun would seem like a relatively simple thing, seeing as there are millions of stars. It may take a lot of time to form a star, but the process of forming one can't be too complex or there wouldn't be so many."

Occam's razor applies, as always in science. Having said that, Einstein amended it by saying that it only needs to be simple enough to provide a reasonable but testable explanation. As such, despite the abundance of stars in the universe, the latter is still mostly empty space. So that would suggest not only a measure of complexity, but also a dearth of building materials.


Post by lowell on Nov 5, 2015 13:47:06 GMT -6

Even in some of the empty space, there is the Higgs field. Or at least that is how I understand it. The Higgs field has been shown to be a probable reality by the evidence for the Higgs boson produced at the CERN Large Hadron Collider.


Post by Deleted on Nov 5, 2015 13:47:25 GMT -6

A lot more to it than I thought.


Post by lowell on Nov 7, 2015 3:00:47 GMT -6

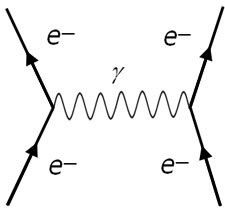

There are primarily two types of Feynman diagrams: those that loop and those that resemble trees. Feynman found that the trees were finite and yielded experimentally good results. But the loops were trouble. They resulted in infinities, because the theory still used point particles.

In a tree diagram, a virtual photon is exchanged between two electrons, causing them to repel. In a loop diagram, many photons are exchanged.

Feynman used another aspect of Maxwell's equations called "gauge symmetry" to redefine the charge and mass of the electrons and regroup the diagrams into large sets, until the infinities canceled each other out. The sets of diagrams absorbed the infinities, or were "renormalized". The physicist Dirac thought it was like a cardsharp reshuffling the deck until the cards with the infinities mysteriously disappeared.

"However, the experimental results were undeniable. In the 1950s Feynman's new theory of renormalization (which provided a way to absorb the infinities) allowed physicists to calculate with incredible precision the energy levels of the hydrogen atom. Although the theory works only for electrons and photons (and not for weak, strong, or gravitational forces), it was undeniably a stunning success. After it was demonstrated that Feynman's version was equivalent to Schwinger's and Tomonaga's, the three shared the Nobel Prize in 1965 for eliminating the infinities from QED. In hindsight, we realize that the real accomplishment was their exploiting Maxwell's gauge symmetry, which is crucially responsible for the seemingly miraculous cancellations of infinities in QED. These powerful symmetries are the reason why the superstring, which has the largest set of symmetries ever found in physics, has such wondrous properties."

Scatterings of two closed strings, tree level (left) and one loop (right). Each closed string loop picks up a factor g_s^2. (This figure is taken from the reference cited as Hanada:2012eg.)

A given diagram represents a well-defined integral of dimension 6L + 2N - 6, where L is the genus of the world sheet, and N is the number of punctures, meaning the number of incoming and outgoing particles. The integral contains no divergences, despite containing the spin-2 graviton. String and supersymmetry contributions are responsible for amazing cancellations. Type I superstring theory contains both open and closed strings, so the perturbation expansion is more complicated. Various world sheet Feynman diagrams at a given order have to be combined to cancel divergences and anomalies.


Post by lowell on Nov 13, 2015 14:17:24 GMT -6

'The 1950s and 1960s were confusing decades marked by false starts. Feynman's rules were not enough to renormalize the strong and weak interactions. Physicists, not realizing the importance of gauge symmetry, explored hundreds of blind alleys without success.

Finally, after two decades of chaos, the key breakthrough was made in the weak interactions. For the first time in almost one hundred years, since the time of Maxwell, the forces of nature took another step toward unification. Once again, the key to the puzzle would be gauge symmetries.

Weak interactions concern the behavior of electrons and their partners, called "neutrinos". (Weakly interacting particles are collectively called "leptons.") Of all the particles in the universe, the neutrino is perhaps the most curious, because it is by far the most elusive. It has no charge, probably has no mass, and is exceedingly hard to detect.

It was predicted by Wolfgang Pauli on purely theoretical grounds in 1930 to explain the strange loss of energy found in radioactive decay. Pauli conjectured that the missing energy was carried off by a new particle that could not be seen in the experiments.

In 1933, Enrico Fermi published the first comprehensive theory of this elusive particle, calling it the "neutrino" ("little neutral one" in Italian). However, because the entire idea of the neutrino was so speculative, his paper originally was rejected for publication by the British journal Nature.

Neutrino experiments were notoriously difficult because neutrinos are very penetrating and leave no traces of their presence. In fact, they can easily penetrate through the earth. Every second our bodies are riddled with neutrinos that entered the earth through China, penetrated the earth's core, and came up through the floor. In fact, if our entire solar system were filled with solid lead, some neutrinos would be able to penetrate even that formidable barrier.

The neutrino's existence was finally confirmed in 1953 in a difficult experiment that involved studying the enormous radiation created by a nuclear reactor.

In addition to the neutrino, the mystery of the weak interactions deepened with the discovery of other weakly interacting particles, such as the "muon". Back in 1937, when this particle was discovered in cosmic ray photographs, it looked just like an electron, except heavier. Physicists were disturbed that the electron seemed to have a useless twin. Why did nature create a carbon copy of the electron? Wasn't one enough? Columbia physicist and Nobel laureate Isidor Isaac Rabi, when told of the discovery of this redundant particle, exclaimed "Who ordered that?"

To make matters worse, physicists in 1962, using the atom smasher in Brookhaven, Long Island, showed that the muon, too, had its own distinct partner, the muon neutrino. In 1977-78 experiments at Stanford University and in Hamburg, Germany, showed that there was yet another redundant electron, this time weighing in at thirty-five hundred times the electron mass. It was dubbed the "tau" particle, with its own separate partner, the tau neutrino. Now there were three types of electrons, each with its own neutrino, each identical to the electron family except for mass. Physicists' faith in the simplicity of nature was shaken by the existence of three redundant pairs or "families" of leptons.
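
For anyone who wants to check the "thirty-five hundred times the electron mass" figure, here is a small sketch of the mass ratios. The rest masses below are approximate reference values I am assuming for illustration; they are not given in the quoted passage.

```python
# Approximate charged-lepton rest masses in MeV (assumed reference values,
# not taken from the quoted text).
LEPTON_MASS_MEV = {
    "electron": 0.51099895,
    "muon": 105.6583755,
    "tau": 1776.86,
}


def mass_ratio_to_electron(name):
    """Mass of the named lepton in units of the electron mass."""
    return LEPTON_MASS_MEV[name] / LEPTON_MASS_MEV["electron"]


ratio_mu = mass_ratio_to_electron("muon")
ratio_tau = mass_ratio_to_electron("tau")
print(round(ratio_mu, 1), round(ratio_tau, 1))
```

On these numbers the muon comes out at a bit over two hundred electron masses and the tau at roughly thirty-five hundred, in line with the passage.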

Faced with the problem of weak interactions, physicists used a time-honored technique: applying analogies stolen from previous theories to create new theories. The essence of QED explained the force between electrons as the exchange of photons. By the same reasoning, physicists conjectured that the force between electrons and neutrinos was caused by the exchange of a new set of particles called W-particles (w for "weak").

The problem, however, was that the theory was nonrenormalizable. No matter how cleverly Feynman's bag of tricks was used, the theory still was plagued with infinities. The problem was that the W-particle theory itself had a fundamental sickness - it had no gauge symmetries, as in Maxwell's equations.

As a consequence, the theory of weak interactions languished for three decades. Not only were experiments difficult to perform (because of the notoriously elusive neutrino), but the W-particle theory was unacceptable. Physicists puttered with the theory over the decades, but no significant breakthroughs were made.


Post by lowell on Nov 18, 2015 1:58:40 GMT -6

'In 1967-68, Steven Weinberg, Abdus Salam, and Sheldon Glashow noticed the amazing similarity between the photon and the W-particle. Then they made the following observation: although Einstein had tried to unite light with the gravitational force, perhaps the correct unification scheme was to unite the photon with the W-particle of weak interactions. This new W-particle theory, called the electro-weak theory, differed decisively from previous W-particle theories because it used the most sophisticated form of gauge symmetry available at that time, the Yang-Mills theory. This theory, formulated in 1954, possessed more symmetries than Maxwell ever dreamed of.

The Yang-Mills theory contained a new mathematical symmetry (represented mathematically as SU(2) × U(1)) that allowed Weinberg and Salam to unite the weak and electromagnetic forces on the same footing. This theory also treated the electron and the neutrino symmetrically as one "family." As far as the theory was concerned, the electron and the neutrino were actually two sides of the same coin. (The theory did not, however, explain why there were three redundant electron families.)

Although the theory was the most ambitious and advanced theory of its time, it raised few eyebrows. Physicists assumed that it was probably nonrenormalizable, like all the other dead ends, and therefore riddled with infinities.

Weinberg, in his original paper, speculated that the Yang-Mills version of the W-particle theory was probably renormalizable, but no one believed him. However, all this changed in 1971.

After three decades of agonizing over the infinities festering within the W-particle theory, a dramatic breakthrough was made when a twenty-four-year-old Dutch graduate student, Gerard 't Hooft, proved that the Yang-Mills theory was renormalizable. To double-check his calculation showing the cancellation of infinities, 't Hooft placed the calculation on computer. One can imagine the excitement that 't Hooft must have felt while awaiting the results of his calculation. He later recalled, "The results of that test were available by July 1971; the output of the program was an uninterrupted string of zeros. Every infinity canceled exactly."

Within months, hundreds of physicists rushed to learn the techniques of 't Hooft and the theory of Weinberg and Salam. For the first time, real numbers, not infinities, poured out of the theory for the S-matrix. Earlier, from 1968 to 1970, not a single paper published by a physicist referred to Weinberg and Salam's theory. By 1973, however, when the impact of their results was being appreciated, 162 papers on their theory were published.

Somehow, in ways that physicists still don't completely understand, the symmetries built in to the Yang-Mills theory eliminated the infinities that had plagued the earlier W-particle theory. Here was the stunning interplay between symmetry and renormalization. It was also a replay of the discovery made by physicists studying QED years earlier - that symmetries somehow canceled the divergences in a quantum field theory. '


Post by lowell on Nov 25, 2015 6:35:01 GMT -6

"Glashow, in his 1979 Nobel Prize acceptance speech for the electro-weak theory, summed up the tremendous excitement of seeing the unification of subatomic forces emerge before his eyes:

'In 1956, when I began doing theoretical physics, the study of elementary particles was like a patchwork quilt. Electrodynamics, weak interactions, and strong interactions were clearly separate disciplines, separately taught and separately studied. There was no coherent theory that described them all. Things have changed. Today we have what has been called a standard theory of elementary physics, in which strong, weak, and electromagnetic interactions all arise from a [single] principle... The theory we now have is an integral work of art; the patchwork quilt has become a tapestry.'

Physicists, already dizzy from the monumental success of the electro-weak theory, turned their attention to solving the strong force.

Gauge symmetry had canceled the divergences of QED and the electro-weak theory. Was gauge theory also the key to canceling the infinities of the strong interactions? The answer was yes, but only after a considerable amount of confusion that lasted for decades.

The origins of strong interaction theory date back to 1935, when Japanese physicist Hideki Yukawa proposed that protons and neutrons were held together in the nucleus by a new force created by the exchange of particles called 'pi mesons.' Just as in QED, where the exchange of photons between the electron and the nucleus held the atom together, Yukawa by analogy proposed that the exchange of the mesons held the nucleus together. He even predicted the mass of these hypothetical particles.

Yukawa was the first to argue that the short-range forces in nature could be explained by the exchange of massive particles. In fact, Yukawa's meson idea provided the original inspiration for other physicists to propose the W-particle a few years later as the carrier for the weak force.

In 1947, English physicist Cecil Powell discovered the meson in his cosmic ray experiments. The particle had a mass very close to that predicted by Yukawa twelve years earlier. For this pioneering work, in unraveling the mysteries of the strong force, Yukawa was awarded the Nobel Prize in 1949, and Powell received the prize the following year.

Although this meson theory met with considerable success (and was renormalizable as well), it was not by any means the final word. In the 1950s and 1960s, physicists using atom smashers in laboratories across the country were discovering hundreds of different types of strongly interacting particles, now called 'hadrons' (which include both the mesons and other strongly interacting particles such as the proton and the neutron).

The existence of hundreds of hadrons was an embarrassment of riches. No one could explain why nature suddenly became more, not less, complicated as scientists probed the subnuclear realm. Everything seemed so simple by contrast in the 1930s when it was thought that the universe was built from just four particles and two forces (the electron, proton, neutron, neutrino, and light and gravity). By definition, elementary particles should be few in number, but physicists in the 1950s were flooded with new hadrons discovered in the nation's laboratories. Obviously, a new theory was required to make some sense out of this chaos."


Post by lowell on Dec 3, 2015 21:19:05 GMT -6

'Nobel laureate Enrico Fermi, observing the plethora of new hadrons, each one with a strange-sounding Greek name, once lamented, “If I could remember the names of all these particles, I would have been a botanist.”

J. Robert Oppenheimer said in jest that the Nobel Prize should be given to the physicist who didn't discover a new particle that year.

By 1958, the number of strongly interacting particles had grown so rapidly that physicists at the University of California at Berkeley published an almanac to keep track of them. The first almanac was nineteen pages long and categorized sixteen particles. By 1960, there were so many particles that a considerably expanded almanac, including a wallet card, was published. By 1995, the list was expanded to over 2,000 pages, describing hundreds of particles.

The Yukawa theory, although renormalizable, was still too primitive to explain the particle zoo emerging from the laboratories. Apparently, renormalizability was not enough.

In the 1950s the first crucial observation was made by a group of physicists in Japan, whose most vocal spokesman was Shoichi Sakata of Nagoya University. The Sakata group, citing the philosophical works of Hegel and Engels, claimed that there should be a sublayer beneath the hadrons consisting of even smaller subnuclear particles. Sakata claimed that the hadrons should consist of three of these particles and that the meson should consist of two of these particles. His group even proposed that these subparticles obeyed a new type of symmetry, called SU(3), which describes the mathematical way in which these three subnuclear particles could be shuffled. This mathematical symmetry, SU(3), allowed Sakata and his group to make precise mathematical predictions about the layer beneath the hadrons.

The Sakata school argued on philosophical and mathematical grounds that matter should consist of an infinite set of sublayers. This is sometimes called the worlds within worlds or onion theory. According to dialectical materialism, each layer of physical reality is created by the interaction of poles. For example, the interaction between the stars creates the galaxies. The interaction between the planets and the sun creates the solar system. The interaction between the atoms creates the molecules. The interaction between the electron and the nucleus creates the atom. And finally the interaction between the proton and the neutron creates the nucleus.

The next breakthrough for the belief that a sublayer existed beneath the hadrons came in the early 1960s, when Murray Gell-Mann of the California Institute of Technology and Israeli physicist Yuval Neeman showed that these hundreds of hadrons occurred in patterns of eight, much like Mendeleev's periodic chart. Gell-Mann even whimsically called this mathematical theory the Eightfold Way, the name of the Buddhist doctrine describing the path to wisdom. (He meant the title as a “colossal joke.”) By looking for “holes” in his Eightfold Way chart, Gell-Mann – like Mendeleev before him – could predict the existence and even the properties of particles that hadn't yet been discovered.

Later, Gell-Mann and George Zweig proposed the complete theory. They discovered that the Eightfold Way arises because of the existence of subnuclear particles (which Gell-Mann dubbed “quarks” after James Joyce's Finnegans Wake). These particles obeyed the symmetry SU(3), which the Sakata school had pioneered years earlier.

Gell-Mann found that by taking simple combinations of these quarks, he could miraculously explain the hundreds of particles found in the laboratories and, more importantly, predict the existence of new ones. (Gell-Mann's theory, although resembling Sakata's in many ways, used a slightly different set of combinations from Sakata's, thereby correcting a small but important mistake in the Sakata theory.) In fact, by properly combining these three quarks, Gell-Mann was able to describe virtually all the particles emerging in the laboratories. For his contributions to strong interaction physics, Gell-Mann received the Nobel Prize in 1969.'
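
To make "simple combinations of these quarks" a bit more concrete, here is a hedged sketch that enumerates three-quark and quark-antiquark flavor combinations from the three original quarks (up, down, strange) and adds up their electric charges. The fractional charges are the standard textbook values and are my assumption here; the passage does not state them. The counts are of flavor contents only, not of physical particle states.

```python
from fractions import Fraction
from itertools import combinations_with_replacement, product

# Standard quark charges in units of the proton charge (assumed, not from the passage).
CHARGE = {"u": Fraction(2, 3), "d": Fraction(-1, 3), "s": Fraction(-1, 3)}


def baryon_charge(quarks):
    """Charge of a three-quark combination such as 'uud'."""
    return sum(CHARGE[q] for q in quarks)


def meson_charge(quark, antiquark):
    """Charge of a quark paired with the antiparticle of `antiquark`."""
    return CHARGE[quark] - CHARGE[antiquark]


# Every unordered choice of three flavors, and every quark/antiquark pairing.
baryons = ["".join(c) for c in combinations_with_replacement("uds", 3)]
mesons = list(product("uds", repeat=2))

for name in baryons:
    print(name, baryon_charge(name))
```

The combination uud comes out with charge +1 (the proton) and udd with charge 0 (the neutron), and every combination has a whole-number charge even though the individual quarks do not.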


Post by lowell on Dec 20, 2015 13:16:40 GMT -6

'In July 1994, physicists held up their champagne glasses in laboratories around the world. The elusive "top quark" finally had been discovered. Physicists at the Fermi National Laboratory outside Chicago could hardly contain their excitement when the press releases were handed out.

What made the top quark significant was that it was the last quark necessary to complete the "Standard Model" - the current and most successful theory of particle interactions. To a particle physicist, it was the last and crowning achievement of a half century of painstaking effort to decode the mysteries of the subatomic world. One chapter in particle physics had been closed. A new chapter in physics was dawning.

Nobel laureate Steve Weinberg said, "There was tremendous theoretical expectation that the top quark is there. A lot of us would have been embarrassed if it were not."

To snare the top quark, the particle accelerator at the Fermi lab, called the Tevatron, created two highly energetic beams of subatomic particles whipping around a large, circular tube, but traveling in opposite directions. The first beam consisted of ordinary protons. The other beam, circulating in the opposite direction and below the first beam, consisted of antiprotons (the antimatter twin of the proton that carries a negative electrical charge). The Tevatron then merged these two circulating beams, smashing the protons into the antiprotons at energies of almost 2 trillion electron volts. The colossal energy released by this sudden collision released a torrent of subatomic debris.

Using a battery of complex automatic cameras and computers, physicists then analyzed the debris from over a trillion photographs. To the unaided eye, these pictures look like a spiderweb, with long, curved fibers emanating from a single point. To the trained eye, these fibers represented the tracks of subatomic particles blasted out from the collision. Teams of physicists then labored over the data, sifting the photographs until just twelve collisions were selected that had the "fingerprint" of a top quark collision.

The physicists then estimated that the top quark had a mass of 174 billion electron volts, making it the heaviest elementary particle ever discovered. In fact, it is so heavy that it is nearly as massive as a gold atom (which contains 197 neutrons and protons). By contrast, the bottom quark has a mass of 5 billion electron volts.
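
The gold-atom comparison is easy to sanity-check. This is a back-of-the-envelope sketch: it assumes each of the 197 nucleons contributes about one atomic mass unit (roughly 0.9315 billion electron volts) and ignores the electrons. That conversion factor is my assumption; the 174 and 5 figures are the ones from the passage.

```python
TOP_QUARK_GEV = 174.0         # from the passage
BOTTOM_QUARK_GEV = 5.0        # from the passage
GOLD_NUCLEONS = 197           # from the passage
ATOMIC_MASS_UNIT_GEV = 0.9315 # assumed: energy equivalent of one atomic mass unit

# Rough gold-atom mass: nucleon count times one atomic mass unit each.
gold_atom_gev = GOLD_NUCLEONS * ATOMIC_MASS_UNIT_GEV

top_to_gold = TOP_QUARK_GEV / gold_atom_gev
top_to_bottom = TOP_QUARK_GEV / BOTTOM_QUARK_GEV
print(round(gold_atom_gev, 1), round(top_to_gold, 2), round(top_to_bottom, 1))
```

On this estimate the top quark carries somewhat more than nine tenths of the mass of a whole gold atom, and about thirty-five times the mass of the bottom quark.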


Post by lowell on Feb 29, 2016 12:31:39 GMT -6

Understanding higher dimensions.

"In science fiction novels, a trip into higher dimensions resembles a journey into a strange but earthlike world. In these novels, people are similar to ourselves, but with some twist. This common misconception is due to the fact that the imaginations of science fiction writers are too limited to grasp the true features of higher-dimensional universes given to us by rigorous mathematics. Science is truly stranger than science fiction.

The simplest way to understand higher-dimensional universes is to study lower-dimensional universes. The first writer to undertake this task in the form of a popular novel was Edwin A. Abbott, a Shakespearean scholar who in 1884 wrote Flatland, a Victorian satire about the curious habits of people who live in two spatial dimensions.

Imagine the people of Flatland living, say, on the surface of a table. This tale is narrated by the pompous Mr. A. Square, who tells us of a world populated by people who are geometric objects. In this stratified world, the women are Straight Lines, workers and soldiers are Triangles, professional men and gentlemen (like himself) are Squares, and the nobility are Pentagons, Hexagons, and Polygons. The more sides on a person, the higher his social rank. Some noblemen have so many sides that they eventually become Circles, which is the highest rank of all.

Mr. Square, a man of considerable social rank, is content to live in the pampered tranquility of this ordered society until one day strange beings from Spaceland (a three-dimensional world) appear before him and introduce him to the wonders of another dimension.

When the people of Spaceland look at Flatlanders, they can see inside their bodies and view their internal organs. This means that the people of Spaceland, in principle, can perform surgery on the people of Flatland without cutting their skin.

What happens when higher-dimensional beings enter a lower-dimensional universe? When the mysterious Lord Sphere of Spaceland enters Flatland, Mr. Square can only see circles of ever-increasing size penetrate his universe. Mr. Square cannot visualize Lord Sphere in his entirety, only cross-sections of his shape.
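
The circles Mr. Square sees can be worked out with the Pythagorean theorem: a sphere of radius R cut by a plane at distance z from its center leaves a circle of radius sqrt(R² - z²). A minimal sketch, with function and variable names chosen only for illustration:

```python
import math


def cross_section_radius(sphere_radius, plane_offset):
    """Radius of the circle where a plane cuts a sphere.

    plane_offset is the distance from the sphere's center to the plane.
    Returns 0.0 when the plane misses (or just touches) the sphere.
    """
    if abs(plane_offset) >= sphere_radius:
        return 0.0
    return math.sqrt(sphere_radius ** 2 - plane_offset ** 2)


# Lord Sphere (radius 5) passing through Flatland, center moving from -5 to +5.
for offset in range(-5, 6):
    print(offset, round(cross_section_radius(5.0, offset), 3))
```

The printed radii grow from a point to the full radius as the center reaches the plane, then shrink back to nothing as the sphere leaves.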

Lord Sphere even invites Mr. Square to visit Spaceland, which involves a harrowing journey where Mr. Square is peeled off his Flatland world and deposited in the forbidden third dimension. His eyes can see only two-dimensional cross-sections of the three-dimensional Spaceland. When Mr. Square meets a Cube, he sees it as a wondrous object that appears as a square within a square that constantly changes shape as he looks at it.

Mr. Square is so shaken by his encounter with the Spacelanders that he decides to tell his fellow Flatlanders of his remarkable journey. His tale, which might upset the ordered society of Flatland, is perceived as seditious by the authorities, and he is arrested and brought before the Council. At his trial, he tries, in vain, to explain the third dimension. To the Polygons and the Circles, he tries to explain the three-dimensional Sphere, the Cube, and the world of Spaceland.

Mr. Square is sentenced to perpetual imprisonment in jail (which consists of a line drawn around him) and lives out his life as a martyr. (Ironically, all Mr. Square has to do is “jump” out of the prison into the third dimension, but this is beyond his comprehension.)

Mr. Abbott, a theologian and headmaster of the City of London School, wrote Flatland as a political satire on the Victorian hypocrisy he saw around him. However, one hundred years after he wrote Flatland, the superstring theory requires physicists to think seriously of what a higher-dimensional universe might look like.

First of all, a ten-dimensional being looking down on our universe could see all of our internal organs and could even perform surgery on us without cutting our skin. This idea of reaching into a solid object without breaking the outer surface seems absurd to us only because our minds are limited when considering higher dimensions, just like the minds of the Polygons on the Council.

Second, if these ten-dimensional beings reached into our universe and poked a finger into our homes, we would see only a sphere of flesh, hovering in midair.

Third, if these ten-dimensional beings grabbed someone who was in jail and deposited him elsewhere, we would see that person mysteriously vanish from jail and then suddenly reappear, as if by “magic,” somewhere else. In many science fiction novels, a favorite device is the “teleporter,” which allows people to be sent across vast distance in the blink of an eye. A more sophisticated teleporter would be a device that would allow someone to leap into a higher dimension and reappear somewhere else.

Our minds, which conceptualize objects in three spatial dimensions, cannot fully grasp higher-dimensional objects. Even physicists and mathematicians, who regularly handle higher-dimensional objects in their research, treat these objects with abstract mathematics rather than trying to visualize them. However, given the analogy with the Flatlanders, there are tricks we can use to visualize higher-dimensional geometric objects such as hypercubes.

The concept of a three-dimensional cube would be alien to the Flatlanders. However, there are at least two ways in which we could convey to them the concept of a cube. First, if we were to unravel a hollow cube, we would, of course, unfold a series of six squares, which can be arranged, say, in the shape of a cross. For us, it is obvious that we can simply rewrap these squares into the shape of a cube. For the Flatlander, this is impossible. Similarly, a higher-dimensional being could convey to us the concept of a hypercube by unraveling it until it becomes a series of three-dimensional cubes, called a tesseract.
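
The count of pieces in each unfolding follows a standard formula: an n-dimensional cube has C(n, k) · 2^(n-k) faces of dimension k. The sketch below uses it to count the squares bounding a cube and the cubes bounding a hypercube; the function name is just an illustrative choice.

```python
import math


def hypercube_face_count(n, k):
    """Number of k-dimensional faces of an n-dimensional cube."""
    return math.comb(n, k) * 2 ** (n - k)


squares_in_unfolded_cube = hypercube_face_count(3, 2)
cubes_in_unfolded_hypercube = hypercube_face_count(4, 3)
print(squares_in_unfolded_cube, cubes_in_unfolded_hypercube)
```

So the cross of six squares for the cube corresponds to a cross of eight cubes for the four-dimensional hypercube.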

(Perhaps the most famous illustration of a tesseract is found in Salvador Dali's painting of the crucifixion of Christ, which is on display at the Metropolitan Museum of Art in New York. In the painting Mary Magdalene is looking up at Christ, who is suspended in midair in front of a series of cubes arranged in the shape of a cross. Upon close inspection, one can see that the cross is not a cross at all, but rather an unraveled hypercube.)

There is yet another way in which the concept of a cube could be conveyed to a Flatlander. If the edges of the cube are made of sticks, and the cube is hollow, we could shine a light on the cube and have the shadow fall upon a two-dimensional plane. The Flatlander would immediately recognize the shadow of the cube as being a square within a square. If we rotated the cube, the shadow of the cube would perform geometric changes that are beyond the understanding of the Flatlanders. Similarly, the shadow of a hypercube whose sides consist of sticks appears to us as a cube within a cube. If the hypercube is rotated, we see the cube within the cube executing geometric gyrations that are beyond our understanding.
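
The "cube within a cube" shadow can be reproduced numerically. This is a minimal sketch assuming a simple perspective projection: a light sits on the fourth axis at an arbitrary distance of 3 units, and each vertex is scaled by how far it is from the light along that axis. The distance and the function name are illustrative assumptions.

```python
from itertools import product


def project_to_3d(vertex4, viewer_distance=3.0):
    """Perspective shadow of a 4D point onto 3D space.

    Points nearer the light along the fourth axis (larger w) cast a bigger shadow.
    """
    x, y, z, w = vertex4
    scale = 1.0 / (viewer_distance - w)
    return (x * scale, y * scale, z * scale)


# The 16 corners of a hypercube with coordinates of -1 or +1 on each axis.
vertices4 = list(product((-1.0, 1.0), repeat=4))
shadow = [project_to_3d(v) for v in vertices4]

# Distinct half-widths of the shadow: one value per nested cube.
half_widths = sorted({round(abs(p[0]), 6) for p in shadow})
print(half_widths)
```

Eight corners land on a small cube and the other eight on a larger cube around it, which is the cube-within-a-cube picture described above. Rotating the hypercube before projecting would mix the two sets of corners and produce the gyrations the passage mentions.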

In summary, higher-dimensional beings can easily visualize lower-dimensional objects, but lower-dimensional beings can visualize only sections or shadows of higher-dimensional objects."
